The new digital networks and their AI management by design

(on the proposal for a Digital Networks Act – DNA)

Alexandre Veronese (Professor at the University of Brasília, key external member of the Jean Monnet Centre of Excellence “Digital Citizenship and Technological Sustainability” (CitDig), University of Minho) and Alessandra Silveira (Editor of this blog, Coordinator of CitDig, University of Minho)

The Digital Networks Act (DNA) proposal was adopted on 21 January 2026. It aims to create a simplified and more harmonised legal framework, because an advanced digital infrastructure is critical for enabling the adoption of Artificial Intelligence (AI), cloud, space and other innovative technologies. The idea behind the proposal is that a cutting-edge digital infrastructure is fundamental for Europe’s economy and society.[1] However, this leads us to think critically about what is or is not desirable about digital networks.

Some academic concepts suffer when they are translated into catchphrases for a wider public. Some become real slogans. Two are important for the Internet debate. The first is Jonathan Zittrain’s idea of a generative Internet.[2] The second is network neutrality. Both are very helpful for thinking about the Internet that we all want. On the other hand, technology is, to some extent, overtaking them in practice. The same is happening with the legal frameworks. The evolution of digital networks is currently eroding old ideas with new features. New digital networks aim to be faster and more resilient. This is why AI is being incorporated into their design.

After all, for there to be near-real-time communication, it is necessary to discriminate between applications according to their functionality. No one would consider it reasonable for autonomous cars to suffer high latency and, therefore, cause accidents. The same goes for online surgeries and robot-assisted surgeries. This leads to the management and slicing of Internet traffic through AI technologies – and implies that digital networks make exclusively automated decisions on a permanent basis. This text draws attention to the implications of this association between digital networks and AI technologies for the fundamental rights recognised by EU law.

Many authors try to establish some degree of fundamental rights protection by drawing on the concept of “by default”. One might consider the idea of data protection by default, legal protection by default, privacy by design, and so many others. The idea consists of an intelligent use of Lawrence Lessig’s “Code”, which encompasses not only software, but hardware as well.[3] For the author, the “Code” could embed some safeguards in new technologies. Ever since Joel Reidenberg’s concept of “Lex Informatica”,[4] legal literature has been relying on the promise that social and legal mechanisms can impose a desirable level of protection. Nonetheless, technology is proving more difficult to tame as developments arrive. A new wave of regulatory theories and tools is emerging to cope with those new issues. The most problematic to this day is AI.

One thing is certain: AI is already part of everyday life in other parts of the world. Quantum computing is likely to be a turning point in the future. But issues relating to AI are the new challenge of the moment. Let us consider a preliminary observation on the concept. AI is a very broad term. It is an umbrella concept that includes a wide range of techniques: machine learning, deep learning, generative AI, natural language processing, neural networks, and many others. The variation and combination of techniques allow developers to produce programs and machines with very specific purposes. A deep learning process was responsible for the victory of a machine against a human in the “Go” contest. But the very same machine would require special treatment to win another kind of game. Generative AI may be useful to process large amounts of data and provide good answers; the limitation is the dataset. If the data inputs are not reliable, the outputs will not be either. It is true that AI is smart for specific tasks and inept at others. It is also true that an AI system is only as good as the data it processes. The question is whether some AI systems could ever overcome such limitations. Right now, no solution to this problem is on the horizon, despite the huge amount of resources invested. However, given that AI offers a new perspective on digital networks, and shows us that the buzzwords are wearing out faster than we think, we must remain vigilant and creative regarding the legal framework to protect fundamental rights.

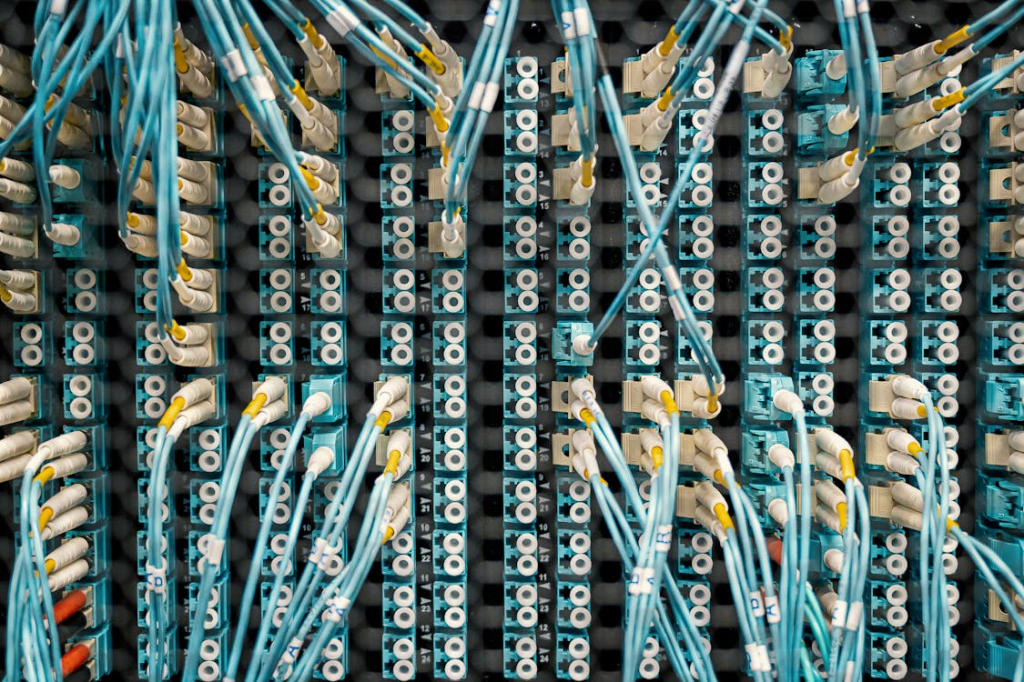

The new digital networks are going to use more AI to enhance their capabilities. 5G already uses AI applications to improve its quality. The full digitalisation of the networks is driving them to mesh with cloud computing and highly capable processing. The data centres are not simple storage. They play an important role in the networks. One can see a circular trend on the move: better infrastructure for transportation (on-shore and off-shore fibre optic cables, satellites and microsatellites), better data centres with more processing power, and better applications to manage the systems. The goal is to reach near-real-time data processing. These technological improvements seem inevitable. 6G is also being developed and it will only accelerate the circular trend.

Jonathan Zittrain’s idea of a generative Internet is very interesting. One can say that he foresaw the necessity of strong social and technical oversight. His book about the dire possibilities of the Internet’s future highlights the importance of having technical standards for safeguarding rights. He indicates that a powerful technical community could turn the risks into social benefits. However, we are talking about giant enterprises that are under enormous pressure. On the one hand, they must continue to grow and generate profits. On the other hand, they must adhere to compliance rules in many jurisdictions. This creates a race to the top in AI investments. If a big enterprise does not invest sufficiently in AI and new technological edges, it may lose the race. And what if it must choose to take on more risks to accelerate? From this point of view, the idea that the technical community will prevent damage seems naïve. The most probable scenario is a situation where these technical personnel will enlist themselves to work for the big companies. They will collaborate in generating systems for the companies’ profits and not necessarily for the welfare of the global society. It is not possible to empathise with Jonathan Zittrain’s point of view. The real question is whether the dark future of the Internet will become reality or not. Generative AI is troublesome, despite being very useful. It does not create anything new in the human sense. It compiles data to provide outputs. Its technical ability to compile evolves, but without more input, it may stop providing answers. The solution is quite simple: human knowledge needs to improve to provide more information. When a blog post is made available on the Open Internet, it becomes yet more input. That is exactly the reason why those big corporations are still paying people to create videos and to produce material, although some do it for free. When such a flow dries up, the generation of output will crumble.

The second concept is the idea of network neutrality.[5] In its origin, it was a great concept, as its core idea was to block infrastructure companies from evaluating data traffic and deciding which one had priority. Such traffic slicing could ruin a new company and empower another. From the infrastructure companies’ point of view, prioritising certain data was necessary for two reasons. The first was traffic congestion. Many heavy users were allegedly draining infrastructure resources and harming other users. The second was the efficiency of data transportation. Without separating traffic and capacities, a lot of bandwidth would be wasted. This loss would benefit no one: neither the heavy user, nor the occasional one. At first, the United States Federal Communications Commission (FCC) approved rules in 2010 banning traffic discrimination. However, a federal appeals court struck down most of those rules in 2014; the FCC adopted a new order in 2015 and then repealed it in 2017. Those FCC decisions always went through long judicial battles, but the struggles were always between infrastructure providers and other companies. In 2024, the FCC re-established network neutrality, only to see a judicial decision strike it down in January 2025 and restore the freedom of the infrastructure enterprises.[6] The question that remains open is whether this concept makes sense when the infrastructure shifts to a form of AI control known as data slicing, which discriminates data traffic by default.

Concepts turn into slogans and policies and eventually fade away. New ones must take their places. However, why do such concepts rise and fall? The answer is relatively simple: the technical features of the networks are always changing. AI is at the centre of those rearrangements nowadays. The new digital networks will use AI to perform tasks that empower the networks. This is their new design. AI will be integrated into the systems to organise traffic. A big company will not miss the opportunity to produce systems and maximise profits. By the same token, it will not struggle against a pattern that is designed to rule the future of the infrastructure. Data slicing is a key feature of the new digital networks, and it erodes the idea of neutrality. The real question is how we can regulate systems that will be so flexible. Their continuous automated decisions will generate many externalities. Some are positive, in that they will enable us to have faster and better networks. The bad news is that some EU rules against automated decisions and discrimination could be undermined in practice by the new digital networks. The worse news is that the users may not even know about this.

The big question we are faced with is how to reconcile the technological innovation of the digital networks themselves with the prohibition of subjection to exclusively automated individual decisions [see Article 22 of the General Data Protection Regulation (GDPR), the CJEU’s SCHUFA judgment, etc.].[7] When faced with the question “Are there restrictions on the use of automated decision-making?”, the official website of the European Commission provides the following answer: “Yes, individuals should not be subject to a decision that is based solely on automated processing (such as algorithms) and that is legally binding or which significantly affects them. A decision may be considered as producing legal effects when the individual’s legal rights or legal status are impacted (…). In addition, processing can significantly affect an individual if it influences their personal circumstances, their behaviour or their choices.”[8]

However, as it has already been explained by computer engineers, in the processes of exploration and mining of large data sets via data mining and machine learning, any decision that does not require any human control to extract the outputs inferred by a learning agent is considered to be exclusively automated. This is why the GDPR requires that the effects on the legal sphere of the data subject be more than trivial, otherwise the learning algorithms would be left without the raw material to evolve – and technological development would be compromised.[9] To this extent, some computer engineers raise many doubts about the feasibility of the provisions of the GDPR on this matter, because the fuzzy logic that underlies AI systems would not allow the average person to understand the inference process. The processing operations within AI make use of analytical models whose approximate predictions externalise fuzzy arguments that accept different degrees of truth (almost, maybe, somewhat) and not just the distinction between truth and falsehood.[10]

In any case, when AI enters this equation, the prohibition of subjection to exclusively automated individual decisions requires additional caution. For example, any AI system falling within Annex III of the AI Act that profiles natural persons should always be classified as a high-risk system under Article 6(3), in fine, of this Regulation – with all the requirements that this classification implies. In the same vein, Article 86 of the AI Act provides for the right to explanations about individual decisions taken based on the results of a high-risk AI system under Annex III of the AI Act. Any natural or legal person whose fundamental rights are affected by a decision – taken by the deployer of an AI system and based on the results of that high-risk system – has the right to obtain clear and relevant explanations about (i) the main elements of that decision, and (ii) the role that the AI system played in the decision-making process.

In any event, we reiterate: Articles 6(3) and 86 of the AI Act are only applicable to AI systems referred to in Annex III to the AI Act. The question that arises is whether the systems used for traffic management in digital networks would be covered by these provisions. Perhaps they would be, as safety components of critical infrastructure (point 2 of Annex III to the AI Act). Even so, the doubt remains, as it is unclear whether traffic management can be tied to the safety of the AI system, except in limited cases such as when it is necessary to prevent overloads or system failures.

Since it appears that there is no specific provision of the AI Act addressing the application of AI in the management of digital networks, the new regulation on digital networks would have to address this issue in a consistent manner, as well as the issue of differentiating personal data from non-personal data in the context of traffic management. In this context, it is important to ascertain whether the information used in traffic management qualifies as personal data – in which case, the application of Article 22 of the GDPR to such management would pose an additional problem.

The right to explanations in Article 86 of the AI Act undoubtedly represents a step forward compared with the indeterminate concepts of Article 22 GDPR regarding exclusively automated decisions – the interpretation of which, until the SCHUFA judgment, remained obscure. And, strictly speaking, some dimensions remain so, because although the CJEU has recognised the prohibition of subjection to exclusively automated decisions, the application of this prohibition remains uncertain, considering the exceptions provided for in Article 22(2) GDPR, as well as the difficulties in delimiting its scope.[11] The SCHUFA judgment was certainly an important step towards limiting the exploitation and monetisation of data inferred from the Internet user’s digital footprint – but the CJEU certainly knows that this is a battle of David versus Goliath with the outcome still open to question.[12] And now EU law will have to accommodate the interests not only of digital platforms but also those of digital networks themselves – and a whole new chapter is opening.

[1] European Commission, The Digital Networks Act, https://digital-strategy.ec.europa.eu/en/policies/digital-networks-act.

[2] Jonathan L. Zittrain, “Law and technology. The end of the generative internet”, Communications of the ACM, 52(1)(2009): 18-20, https://doi.org/10.1145/1435417.1435426.

[3] Lawrence Lessig, Code: and other laws of cyberspace, version 2.0 (Penguin Books, 2006).

[4] Joel R. Reidenberg, “Lex informatica: The formulation of information policy rules through technology”, Texas Law Review 76 (1997-98), https://ir.lawnet.fordham.edu/cgi/viewcontent.cgi?article=1041&context=faculty_scholarship.

[5] Tim Wu, “Network neutrality, broadband discrimination”, Journal on Telecommunication & High Technology Law 2 (2003), https://scholarship.law.columbia.edu/cgi/viewcontent.cgi?article=2282&context=faculty_scholarship.

[6] Cecilia Kang, “Net neutrality rules struck down by appeals court”, The New York Times, 2 January 2025, https://www.nytimes.com/2025/01/02/technology/net-neutrality-rules-fcc.html.

[7] Alessandra Silveira, “Finally, the ECJ is interpreting Article 22 GDPR (on individual decisions based solely on automated processing, including profiling)”, The Official Blog of UNIO – Thinking & Debating Europe, 10 April 2023, https://officialblogofunio.com/2023/04/10/finally-the-ecj-is-interpreting-article-22-gdpr-on-individual-decisions-based-solely-on-automated-processing-including-profiling/; “Automated individual decision-making and profiling [on case C-634/21—SCHUFA (Scoring)]”, UNIO – EU Law Journal, 8(2) (2023), https://doi.org/10.21814/unio.8.2.4842; “On inferred personal data and the difficulties of EU law in dealing with this matter”, The Official Blog of UNIO – Thinking & Debating Europe, 19 March 2024, https://officialblogofunio.com/2024/03/19/editorial-of-march-2024/.

[8] See European Commission, https://commission.europa.eu/law/law-topic/data-protection/rules-business-and-organisations/dealing-citizens/are-there-restrictions-use-automated-decision-making_en.

[9] Alessandra Silveira, “Finally, the ECJ is interpreting Article 22 GDPR (on individual decisions based solely on automated processing, including profiling)”.

[10] César Analide and Diogo Morgado Rebelo, “Inteligência artificial na era data-driven, a lógica fuzzy das aproximações soft computing e a proibição de sujeição a decisões tomadas exclusivamente com base na exploração e prospeção de dados pessoais”, Fórum de proteção de dados, Comissão Nacional de Proteção de Dados, No. 6 (2019), Lisbon.

[11] Proof of this is the misinterpretation that the European Commission continues to make of the rights resulting from Article 22 (3) GDPR – in disagreement with recital 71 GDPR (which expressly refers to “in any case”) and SCHUFA Judgment (recitals 65 and 66) –, as if the European and national legislator were not bound by those rights. The Commission’s official website states that “Except where such decision-making is based on a law, the individual must be at least informed of i) the logic involved in the decision-making process, ii) their right to obtain human intervention, iii) the potential consequences of the processing and iv) their right to contest the decision.” See European Commission, https://commission.europa.eu/law/law-topic/data-protection/rules-business-and-organisations/dealing-citizens/are-there-restrictions-use-automated-decision-making_en.

[12] Alessandra Silveira, “On rebalancing powers in the digital ecosystem in recent CJEU case law (or on the battle between David and Goliath)”, Official Blog of UNIO – Thinking & Debating Europe, 31 October 2024, https://officialblogofunio.com/2024/10/31/on-rebalancing-powers-in-the-digital-ecosystem-in-recent-cjeu-case-law-or-on-the-battle-between-david-and-goliath/.

Picture credit: by Brett Sayles on pexels.com.